Deployment

Multi-node, high-availability Pigsty deployment for serious production environments.

Unlike Getting Started, production Pigsty deployments require more Architecture Planning and Preparation.

This chapter helps you understand the complete deployment process and provides best practices for production environments.

Before deploying to production, we recommend testing in Pigsty’s Sandbox to fully understand the workflow.

Use Vagrant to create a local 4-node sandbox, or leverage Terraform to provision larger simulation environments in the cloud.

For production, you typically need at least three nodes for high availability. You should understand Pigsty’s core Concepts and common administration procedures, including Configuration, Ansible Playbooks, and Security Hardening for enterprise compliance.

1 - Install Pigsty for Production

How to install Pigsty on Linux hosts for production?

This is the Pigsty production multi-node deployment guide. For single-node Demo/Dev setups, see Getting Started.

Summary

Prepare nodes with SSH access following your architecture plan, install a compatible Linux OS, then run the following as an admin user with passwordless ssh and sudo:

curl -fsSL https://repo.pigsty.io/get | bash; # International

curl -fsSL https://repo.pigsty.cc/get | bash; # China Mirror

This runs the install script, which downloads and extracts the Pigsty source to your home directory and installs its dependencies. Then complete the configuration and deployment steps to finish.

Before running deploy.yml for deployment, review and edit the configuration inventory: pigsty.yml.

cd ~/pigsty # Enter Pigsty directory

./configure -g # Generate config file (optional, skip if you know how to configure)

./deploy.yml # Execute deployment playbook based on generated config

After installation, access the WebUI via IP/domain on ports 80/443, and the PostgreSQL service on port 5432.

Full installation takes 3-10 minutes depending on node specs and network. Offline installation speeds this up significantly; slim installation is faster still when monitoring is not needed.

Video Example: 20-node Production Simulation (Ubuntu 24.04 x86_64)

Prepare

Production Pigsty deployment involves preparation work. Here’s the complete checklist:

| Item | Requirement | Item | Requirement |
|---|---|---|---|
| Node | At least 1C2G, no upper limit | Plan | Multiple homogeneous nodes: 2/3/4 or more |
| Disk | /data as default mount point | FS | xfs recommended; ext4/zfs as needed |
| VIP | L2 VIP, optional (unavailable in cloud) | Network | Static IPv4, single-node can use 127.0.0.1 |
| CA | Self-signed CA or specify existing certs | Domain | Local/public domain, optional, default i.pigsty |
| Kernel | Linux x86_64 / aarch64 | Linux | el8, el9, el10, d12, d13, u22, u24 |
| Locale | C.UTF-8 or C | Firewall | Ports: 80/443/22/5432 (optional) |
| User | Avoid root and postgres | Sudo | sudo privilege, preferably with nopass |
| SSH | Passwordless SSH via public key | Accessible | `ssh <ip\|alias> sudo ls` runs without error |

Install

Use the following to automatically install the Pigsty source package to ~/pigsty (recommended). Deployment dependencies (Ansible) are auto-installed.

curl -fsSL https://repo.pigsty.io/get | bash # Install latest stable version

curl -fsSL https://repo.pigsty.cc/get | bash # China mirror

curl -fsSL https://repo.pigsty.io/get | bash -s v4.0.0 # Install specific version

If you prefer not to run remote scripts, download or clone the source manually. When using git, always check out a specific version before use:

git clone https://github.com/pgsty/pigsty; cd pigsty;

git checkout v4.0.0; # Always check out a specific version when using git

After a manual download or clone, run bootstrap to install Ansible and the other dependencies, or install them yourself:

./bootstrap # Install ansible for subsequent deployment

In Pigsty, deployment details are defined by the configuration inventory—the pigsty.yml config file. Customize through declarative configuration.

Pigsty provides configure as an optional configuration wizard that generates a configuration inventory with good defaults based on your environment:

./configure -g # Use wizard to generate config with random passwords

The generated config defaults to ~/pigsty/pigsty.yml. Review and customize before installation.

Many configuration templates are available for reference. Select one with -c, or skip the wizard and edit pigsty.yml directly:

./configure -c ha/full -g # Use 4-node sandbox template

./configure -c ha/trio -g # Use 3-node minimal HA template

./configure -c ha/dual -g -v 17 # Use 2-node semi-HA template with PG 17

./configure -c ha/simu -s # Use 20-node production simulation, skip IP check, no random passwords
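The template names above follow the planned node count, which can be captured in a small lookup. This is only a sketch: pick_template is a hypothetical helper and not part of Pigsty, and it covers only the four templates named in this section.

```shell
# pick_template N: print the template named in this guide for N nodes.
# Hypothetical helper, not a Pigsty command.
pick_template() {
  case "$1" in
    2)  echo "ha/dual" ;;   # 2-node semi-HA
    3)  echo "ha/trio" ;;   # 3-node minimal HA
    4)  echo "ha/full" ;;   # 4-node sandbox
    20) echo "ha/simu" ;;   # 20-node production simulation
    *)  echo "no template listed here for $1 nodes" >&2; return 1 ;;
  esac
}

# Usage (inside ~/pigsty): ./configure -c "$(pick_template 3)" -g
```

Any other node count returns a non-zero status, which is the cue to start from the closest template and edit pigsty.yml by hand.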

Example configure output

vagrant@meta:~/pigsty$ ./configure

configure pigsty v4.0.0 begin

[ OK ] region = china

[ OK ] kernel = Linux

[ OK ] machine = x86_64

[ OK ] package = deb,apt

[ OK ] vendor = ubuntu (Ubuntu)

[ OK ] version = 22 (22.04)

[ OK ] sudo = vagrant ok

[ OK ] ssh = vagrant@127.0.0.1 ok

[WARN] Multiple IP address candidates found:

(1) 192.168.121.38 inet 192.168.121.38/24 metric 100 brd 192.168.121.255 scope global dynamic eth0

(2) 10.10.10.10 inet 10.10.10.10/24 brd 10.10.10.255 scope global eth1

[ OK ] primary_ip = 10.10.10.10 (from demo)

[ OK ] admin = vagrant@10.10.10.10 ok

[ OK ] mode = meta (ubuntu22.04)

[ OK ] locale = C.UTF-8

[ OK ] ansible = ready

[ OK ] pigsty configured

[WARN] don't forget to check it and change passwords!

proceed with ./deploy.yml

The wizard only replaces the current node’s IP (use -s to skip replacement). For multi-node deployments, replace other node IPs manually.

Also customize the config as needed—modify default passwords, add nodes, etc.
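Because the wizard only rewrites the current node's IP, it can help to list every address that still appears in the generated file before deploying. A minimal sketch, assuming standard grep and sort; list_inventory_ips is a hypothetical helper, not a Pigsty command:

```shell
# list_inventory_ips FILE: print each distinct IPv4 literal found in FILE,
# sorted numerically by octet. Hypothetical helper for manual review.
list_inventory_ips() {
  grep -oE '([0-9]{1,3}\.){3}[0-9]{1,3}' "$1" |
    sort -t. -u -k1,1n -k2,2n -k3,3n -k4,4n
}

# Usage: list_inventory_ips ~/pigsty/pigsty.yml
```

Any address in the output that does not belong to one of your nodes is a template placeholder that still needs to be replaced.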

Common configure parameters:

| Parameter | Description |
|---|---|
| `-c\|--conf` | Specify config template relative to conf/, without .yml suffix |
| `-v\|--version` | PostgreSQL major version: 13, 14, 15, 16, 17, 18 |
| `-r\|--region` | Upstream repo region for faster downloads: `default\|china\|europe` |
| `-n\|--non-interactive` | Use CLI params for primary IP, skip interactive wizard |
| `-x\|--proxy` | Configure proxy_env from current environment variables |

If your machine has multiple IPs, explicitly specify one with -i|--ip <ipaddr> or provide it interactively.

The script replaces IP placeholder 10.10.10.10 with the current node’s primary IPv4. Use a static IP; never use public IPs.

Generated config is at ~/pigsty/pigsty.yml. Review and modify before installation.

Change default passwords!

We strongly recommend modifying default passwords and credentials before installation. See Security Hardening.

Deploy

Pigsty’s deploy.yml playbook applies the configuration blueprint to all target nodes.

./deploy.yml # Deploy everything on all nodes at once

Example deployment output

......

TASK [pgsql : pgsql init done] *************************************************

ok: [10.10.10.11] => {

"msg": "postgres://10.10.10.11/postgres | meta | dbuser_meta dbuser_view "

}

......

TASK [pg_monitor : load grafana datasource meta] *******************************

changed: [10.10.10.11]

PLAY RECAP *********************************************************************

10.10.10.11 : ok=302 changed=232 unreachable=0 failed=0 skipped=65 rescued=0 ignored=1

localhost : ok=6 changed=3 unreachable=0 failed=0 skipped=1 rescued=0 ignored=0

When output ends with pgsql init done, PLAY RECAP, etc., installation is complete!
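The PLAY RECAP lines can also be checked mechanically when the deployment output is saved to a file. A minimal sketch, assuming the recap format shown in the example output above; recap_ok is a hypothetical helper, not part of Pigsty or Ansible:

```shell
# recap_ok: read Ansible output on stdin; succeed only if a PLAY RECAP
# section exists and every host line reports unreachable=0 and failed=0.
recap_ok() {
  awk '
    /^PLAY RECAP/ { in_recap = 1; next }
    in_recap && /unreachable=/ {
      hosts++
      if ($0 !~ /unreachable=0( |$)/ || $0 !~ /failed=0( |$)/) bad++
    }
    END { exit (hosts > 0 && bad == 0) ? 0 : 1 }
  '
}

# Usage: ./deploy.yml | tee deploy.log; recap_ok < deploy.log && echo "clean run"
```

A missing recap counts as a failure, so a run that aborted before the summary is not mistaken for success.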

Upstream repo changes may cause online installation failures!

Upstream repos (Linux/PGDG) may break due to improper updates, causing deployment failures (quite common)!

For serious production deployments, we strongly recommend using verified offline packages for offline installation.

Avoid running deploy playbook repeatedly!

Warning: Running deploy.yml again on an initialized environment may restart services and overwrite configs. Be careful!

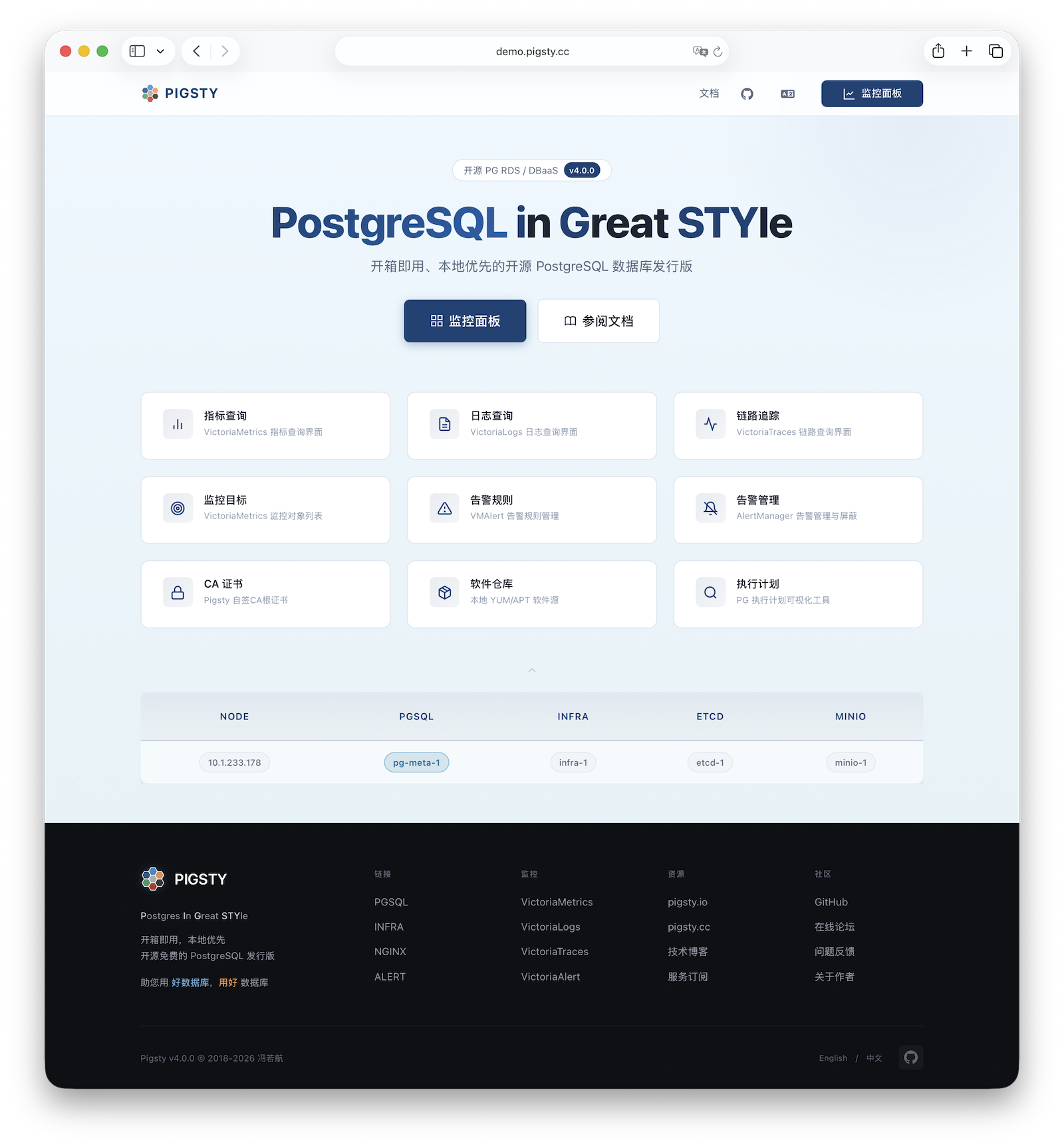

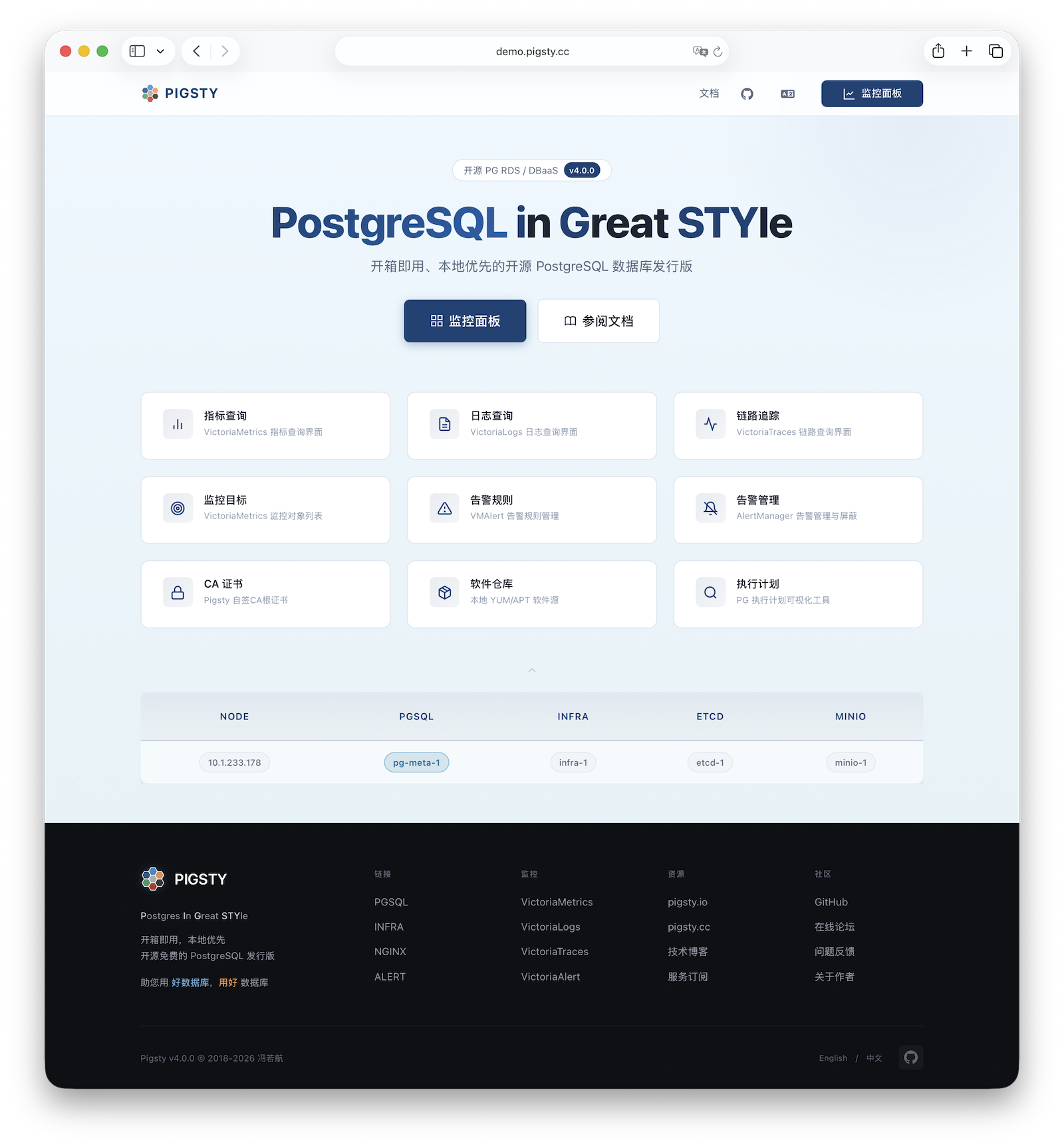

Interface

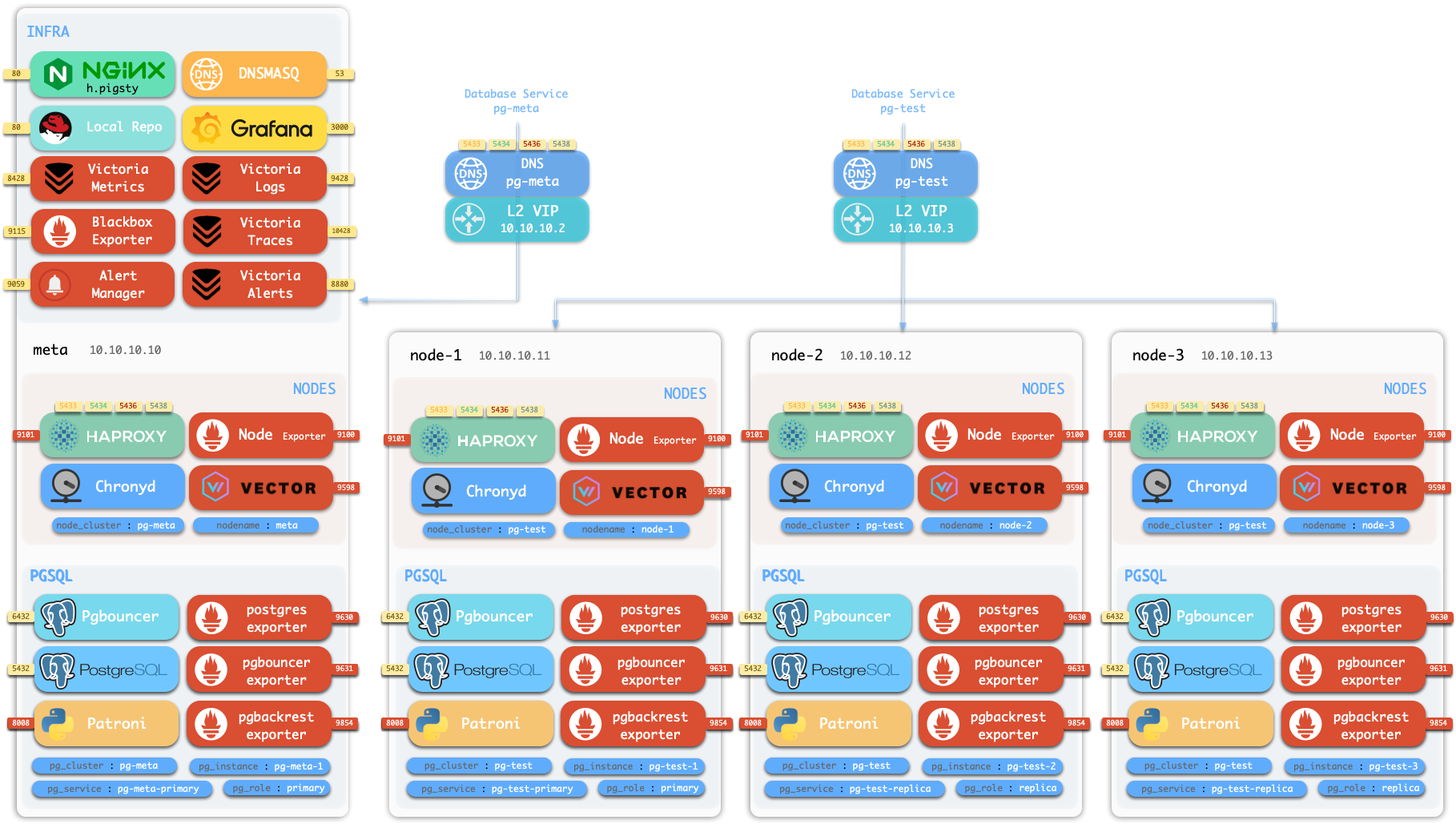

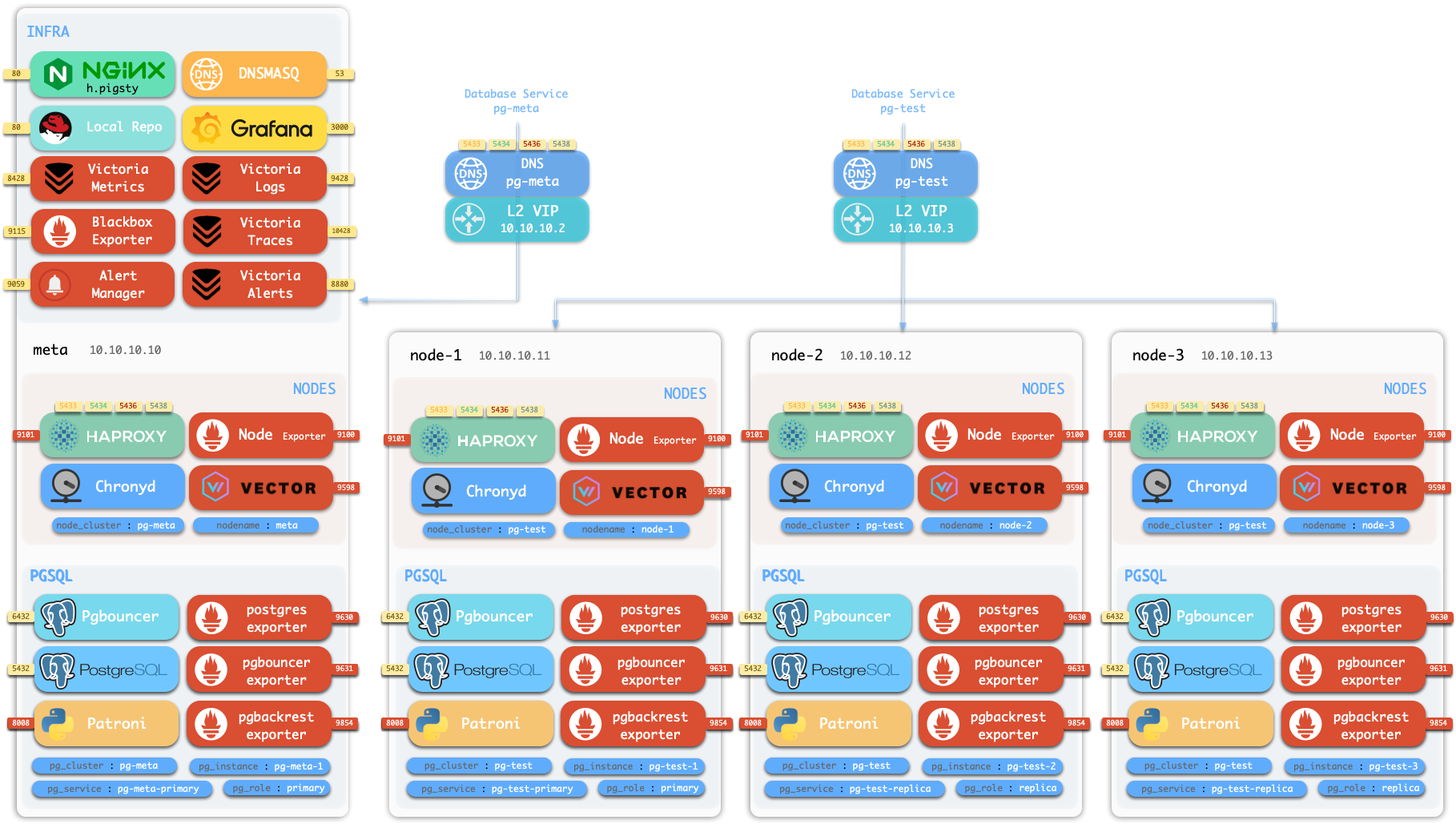

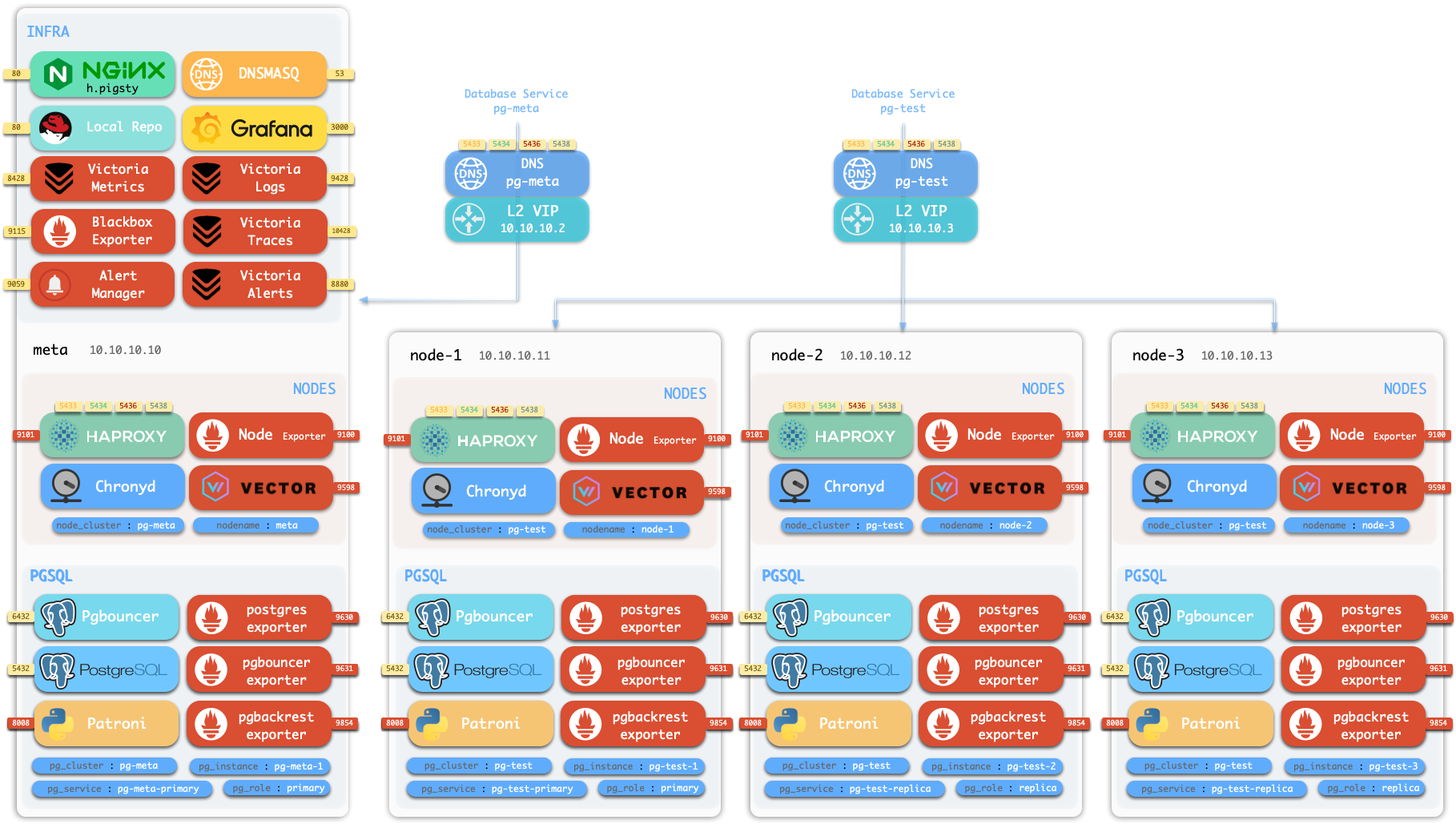

Assuming the 4-node deployment template, your Pigsty environment should have a structure like:

| ID | NODE | PGSQL | INFRA | ETCD |
|---|---|---|---|---|
| 1 | 10.10.10.10 | pg-meta-1 | infra-1 | etcd-1 |
| 2 | 10.10.10.11 | pg-test-1 | - | - |
| 3 | 10.10.10.12 | pg-test-2 | - | - |
| 4 | 10.10.10.13 | pg-test-3 | - | - |

The INFRA module provides a graphical management interface via browser, accessible through Nginx’s 80/443 ports.

The PGSQL module provides a PostgreSQL database server on port 5432, also accessible via Pgbouncer/HAProxy proxies.

For production multi-node HA PostgreSQL clusters, use service access for automatic traffic routing.

More

After installation, explore the WebUI and access PostgreSQL service via port 5432.

Deploy and monitor more clusters—add definitions to the configuration inventory and run:

bin/node-add pg-test # Add pg-test cluster's 3 nodes to Pigsty management

bin/pgsql-add pg-test # Initialize a 3-node pg-test HA PG cluster

bin/redis-add redis-ms # Initialize Redis cluster: redis-ms

Most modules require the NODE module first. See available modules:

PGSQL, INFRA, NODE, ETCD,

MINIO, REDIS, FERRET, DOCKER…

2 - Prepare Resources for Serious Deployment

Production deployment preparation including hardware, nodes, disks, network, VIP, domain, software, and filesystem requirements.

Pigsty runs on nodes (physical machines or VMs). This document covers the planning and preparation required for deployment.

Node

Pigsty currently runs on Linux kernel with x86_64 / aarch64 architecture.

A “node” refers to an SSH accessible resource that provides a bare Linux OS environment.

It can be a physical machine, a virtual machine, or a container that runs systemd, sudo, and sshd.

Deploying Pigsty requires at least 1 node. You can prepare more and deploy everything in one pass via playbooks, or add nodes later.

The minimum spec requirement is 1C1G, but at least 1C2G is recommended. Higher is better—no upper limit. Parameters are auto-tuned based on available resources.

The number of nodes you need depends on your requirements. See Architecture Planning for details.

Although a single-node deployment with external backup provides reasonable recovery guarantees, we recommend multiple nodes for production. A functioning HA setup requires at least 3 nodes; 2 nodes provide Semi-HA.

Disk

Pigsty uses /data as the default data directory. If you have a dedicated data disk, mount it there.

Use /data1, /data2, /dataN for additional disk drives.

To use a different data directory, override the corresponding data-directory parameters in the configuration inventory.

Filesystem

You can use any supported Linux filesystem for data disks. For production, we recommend xfs.

xfs is a Linux standard with excellent performance and CoW capabilities for instant large database cluster cloning. MinIO requires xfs.

ext4 is another viable option with a richer data recovery tool ecosystem, but lacks CoW.

zfs provides RAID and snapshot features but with significant performance overhead and requires separate installation.

Choose among these three based on your needs. Avoid NFS for database services.

Pigsty assumes /data is owned by root:root with 755 permissions.

Admins can assign ownership for first-level directories; each application runs with a dedicated user in its subdirectory.

See FHS for the directory structure reference.

Network

Pigsty defaults to online installation mode, requiring outbound Internet access.

Offline installation eliminates the Internet requirement.

Internally, Pigsty requires a static network. Assign a fixed IPv4 address to each node.

The IP address serves as the node’s unique identifier—the primary IP bound to the main network interface for internal communications.

For single-node deployment without a fixed IP, use the loopback address 127.0.0.1 as a workaround.

Never use Public IP as identifier

Using public IP addresses as node identifiers can cause security and connectivity issues. Always use internal IP addresses.

VIP

Pigsty supports optional L2 VIP for NODE clusters (keepalived) and PGSQL clusters (vip-manager).

To use L2 VIP, you must explicitly assign an L2 VIP address for each node/database cluster.

This is straightforward on your own hardware but may be challenging in public cloud environments.

L2 VIP requires L2 Networking

To use optional Node VIP and PG VIP features, ensure all nodes are on the same L2 network.

CA

Pigsty generates a self-signed CA infrastructure for each deployment, issuing all encryption certificates.

If you have an existing enterprise CA or self-signed CA, you can use it to issue the certificates Pigsty requires.

Domain

Pigsty uses a local static domain i.pigsty by default for WebUI access. This is optional—IP addresses work too.

For production, domain names are recommended to enable HTTPS and encrypted data transmission.

Domains also allow multiple services on the same port, differentiated by domain name.

For Internet-facing deployments, use public DNS providers (Cloudflare, AWS Route53, etc.) to manage resolution.

Point your domain to the Pigsty node’s public IP address.

For LAN/office network deployments, use internal DNS servers with the node’s internal IP address.

For local-only access, add the following to /etc/hosts on machines accessing the Pigsty WebUI:

10.10.10.10 i.pigsty # Replace with your domain and Pigsty node IP
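The hosts entry can also be added from a script without creating duplicates. A minimal sketch; add_hosts_entry is a hypothetical helper, and writing to /etc/hosts itself requires root:

```shell
# add_hosts_entry FILE IP NAME: append "IP NAME" to FILE unless a line
# already maps that IP to that name. Hypothetical helper.
add_hosts_entry() {
  if awk -v ip="$2" -v name="$3" '
       $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
       END { exit !found }' "$1" 2>/dev/null; then
    return 0                      # entry already present, nothing to do
  fi
  printf '%s %s\n' "$2" "$3" >> "$1"
}

# Usage (as root): add_hosts_entry /etc/hosts 10.10.10.10 i.pigsty
```

Running it twice leaves a single line in the file, so it is safe to include in provisioning scripts.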

Linux

Pigsty runs on Linux. It supports 14 mainstream distributions: Compatible OS List

We recommend RockyLinux 10.0, Debian 13.2, or Ubuntu 24.04.2 as default options.

On macOS and Windows, use VM software or Docker systemd images to run Pigsty.

We strongly recommend a fresh OS installation. If your server already runs Nginx, PostgreSQL, or similar services, consider deploying on new nodes.

Use the same OS version on all nodes

For multi-node deployments, ensure all nodes use the same Linux distribution, architecture, and version. Heterogeneous deployments may work but are unsupported and may cause unpredictable issues.

Locale

We recommend setting en_US as the primary OS language, or at minimum ensuring this locale is available, so PostgreSQL logs are in English.

Some distributions (e.g., Debian) may not provide the en_US locale by default. Enable it with:

localedef -i en_US -f UTF-8 en_US.UTF-8

localectl set-locale LANG=en_US.UTF-8
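To confirm the locale is present afterwards, the output of locale -a can be checked. A small sketch; has_en_us_utf8 is a hypothetical helper, and the pattern accepts both the utf8 and UTF-8 spellings that different systems print:

```shell
# has_en_us_utf8: read a locale list on stdin and succeed if it contains
# en_US with a UTF-8 codeset (case-insensitive, with or without the hyphen).
has_en_us_utf8() {
  grep -qiE '^en_US\.utf-?8$'
}

# Usage: locale -a | has_en_us_utf8 && echo "en_US.UTF-8 is available"
```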

For PostgreSQL, we strongly recommend using the built-in C.UTF-8 collation (PG 17+) as the default.

The configuration wizard automatically sets C.UTF-8 as the collation when PG version and OS support are detected.

Ansible

Pigsty uses Ansible to control all managed nodes from the admin node.

See Installing Ansible for details.

Pigsty installs Ansible on Infra nodes by default, making them usable as admin nodes (or backup admin nodes).

For single-node deployment, the installation node serves as both the admin node running Ansible and the INFRA node hosting infrastructure.

Pigsty

You can install the latest stable Pigsty source with:

curl -fsSL https://repo.pigsty.io/get | bash; # International

curl -fsSL https://repo.pigsty.cc/get | bash; # China Mirror

To install a specific version, use the -s <version> parameter:

curl -fsSL https://repo.pigsty.io/get | bash -s v4.0.0

curl -fsSL https://repo.pigsty.cc/get | bash -s v4.0.0

To install the latest beta version:

curl -fsSL https://repo.pigsty.io/beta | bash;

curl -fsSL https://repo.pigsty.cc/beta | bash;

For developers or the latest development version, clone the repository directly:

git clone https://github.com/pgsty/pigsty.git;

cd pigsty; git checkout v4.0.0

If your environment lacks Internet access, download the source tarball from GitHub Releases or the Pigsty repository:

wget https://repo.pigsty.io/src/pigsty-v4.0.0.tgz

wget https://repo.pigsty.cc/src/pigsty-v4.0.0.tgz

3 - Planning Architecture and Nodes

How many nodes? Which modules need HA? How to plan based on available resources and requirements?

Pigsty uses a modular architecture. You can combine modules like building blocks and express your intent through declarative configuration.

Common Patterns

Here are common deployment patterns for reference. Customize based on your requirements:

| Multi-node Pattern | INFRA | ETCD | PGSQL | MINIO | Description |
|---|---|---|---|---|---|
| Two-node (dual) | 1 | 1 | 2 | | Semi-HA, tolerates specific node failure |
| Three-node (trio) | 3 | 3 | 3 | | Standard HA, tolerates any one failure |
| Four-node (full) | 1 | 1 | 1+3 | | Demo setup, single INFRA/ETCD |
| Production (simu) | 2 | 3 | n | n | 2 INFRA, 3 ETCD |
| Large-scale (custom) | 3 | 5 | n | n | 3 INFRA, 5 ETCD |

Your architecture choice depends on reliability requirements and available resources.

Serious production deployments require at least 3 nodes for HA configuration.

With only 2 nodes, use Semi-HA configuration.

Trade-offs

- Pigsty monitoring requires at least 1 INFRA node. Production typically uses 2; large-scale deployments use 3.

- PostgreSQL HA requires at least 1 ETCD node. Production typically uses 3; large-scale uses 5. Must be odd numbers.

- Object storage (MinIO) requires at least 1 MINIO node. Production typically uses 4+ nodes in MNMD clusters.

- Production PG clusters typically use at least two-node primary-replica configuration; serious deployments use 3 nodes; high read loads can have dozens of replicas.

- For PostgreSQL, you can also use advanced configurations: offline instances, sync instances, standby clusters, delayed clusters, etc.
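The odd-number rule for ETCD follows from majority quorum: a cluster of n members needs floor(n/2) + 1 of them to agree, so it survives n minus that many failures. A short sketch of the arithmetic (the function names are illustrative only):

```shell
# Majority quorum arithmetic for an n-member etcd cluster.
etcd_quorum()    { echo $(( $1 / 2 + 1 )); }            # members needed to agree
etcd_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }     # failures survivable

for n in 1 2 3 4 5; do
  echo "members=$n quorum=$(etcd_quorum "$n") tolerates=$(etcd_tolerance "$n")"
done
```

Three members tolerate one failure and so do four, while five tolerate two. An even member count adds a node without adding fault tolerance, which is why 1, 3, or 5 members are used.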

Single-Node Setup

The simplest configuration with everything on a single node. Installs four essential modules by default. Typically used for demos, devbox, or testing.

With an external S3/MinIO backup repository providing RTO/RPO guarantees, this configuration works for standard production environments.

Single-node variants:

- Rich (rich): Production single-node template with local MinIO object storage, local software repo, and all PG extensions.
- Slim (slim): Installs only PGSQL and ETCD, no monitoring infra. Slim installation can expand to multi-node HA deployment.
- Infra-only (infra): Opposite of slim; installs only INFRA monitoring infrastructure, no database services, for monitoring other instances.
- Alternative kernels: Replace vanilla PG with derivatives: pgsql, citus, mssql, polar, ivory, mysql, pgtde, oriole, supabase.

Two-Node Setup

Two-node configuration enables database replication and Semi-HA capability, with better data redundancy and limited failover support.

Two-node HA auto-failover has limitations. This “Semi-HA” setup only auto-recovers from specific node failures:

- If node-1 fails: No automatic failover; node-2 must be promoted manually.
- If node-2 fails: Automatic failover works; node-1 is promoted automatically.

Three-Node Setup

Three-node template provides true baseline HA configuration, tolerating any single node failure with automatic recovery.

| ID | NODE | PGSQL | INFRA | ETCD |
|---|---|---|---|---|
| 1 | node-1 | pg-meta-1 | infra-1 | etcd-1 |
| 2 | node-2 | pg-meta-2 | infra-2 | etcd-2 |
| 3 | node-3 | pg-meta-3 | infra-3 | etcd-3 |

Four-Node Setup

Pigsty Sandbox uses the standard four-node configuration.

| ID | NODE | PGSQL | INFRA | ETCD |
|---|---|---|---|---|
| 1 | node-1 | pg-meta-1 | infra-1 | etcd-1 |
| 2 | node-2 | pg-test-1 | | |
| 3 | node-3 | pg-test-2 | | |
| 4 | node-4 | pg-test-3 | | |

For demo purposes, INFRA / ETCD modules aren’t configured for HA. You can adjust further:

| ID | NODE | PGSQL | INFRA | ETCD | MINIO |
|---|---|---|---|---|---|
| 1 | node-1 | pg-meta-1 | infra-1 | etcd-1 | minio-1 |
| 2 | node-2 | pg-test-1 | infra-2 | etcd-2 | |
| 3 | node-3 | pg-test-2 | | etcd-3 | |
| 4 | node-4 | pg-test-3 | | | |

More Nodes

With proper virtualization infrastructure or abundant resources, you can use more nodes for dedicated deployment of each module, achieving optimal reliability, observability, and performance.

| ID | NODE | INFRA | ETCD | MINIO | PGSQL |
|---|---|---|---|---|---|
| 1 | 10.10.10.10 | infra-1 | | | pg-meta-1 |
| 2 | 10.10.10.11 | infra-2 | | | pg-meta-2 |
| 3 | 10.10.10.21 | | etcd-1 | | |
| 4 | 10.10.10.22 | | etcd-2 | | |
| 5 | 10.10.10.23 | | etcd-3 | | |
| 6 | 10.10.10.31 | | | minio-1 | |
| 7 | 10.10.10.32 | | | minio-2 | |
| 8 | 10.10.10.33 | | | minio-3 | |
| 9 | 10.10.10.34 | | | minio-4 | |
| 10 | 10.10.10.40 | | | | pg-src-1 |
| 11 | 10.10.10.41 | | | | pg-src-2 |
| 12 | 10.10.10.42 | | | | pg-src-3 |
| 13 | 10.10.10.50 | | | | pg-test-1 |
| 14 | 10.10.10.51 | | | | pg-test-2 |
| 15 | 10.10.10.52 | | | | pg-test-3 |
| 16 | …… | | | | |

4 - Setup Admin User and Privileges

Admin user, sudo, SSH, accessibility verification, and firewall configuration

Pigsty requires an OS admin user with passwordless SSH and Sudo privileges on all managed nodes.

This user must be able to SSH to all managed nodes and execute sudo commands on them.

User

Typically use names like dba or admin, avoiding root and postgres:

- Using root for deployment is possible but not a production best practice.
- Using postgres (pg_dbsu) as admin user is strictly prohibited.

Passwordless

The passwordless requirement is optional if you can accept entering a password for every ssh and sudo command.

Use -k|--ask-pass when running playbooks to prompt for the SSH password, and -K|--ask-become-pass to prompt for the sudo password.

Some enterprise security policies may prohibit passwordless ssh or sudo. In such cases, use the options above, or consider configuring a sudoers rule with a longer password cache time to reduce password prompts.

Create Admin User

Typically, your server/VM provider creates an initial admin user.

If unsatisfied with that user, Pigsty’s deployment playbook can create a new admin user for you.

Assuming you have root access or an existing admin user on the node, create an admin user with Pigsty itself:

./node.yml -k -K -t node_admin -e ansible_user=[your_existing_admin]

This leverages the existing admin to create a new one: a dedicated dba (uid=88) user with sudo/ssh properly configured.

Sudo

All admin users should have sudo privileges on all managed nodes, preferably with passwordless execution.

To configure an admin user with passwordless sudo from scratch, edit/create a sudoers file (assuming username vagrant):

echo '%vagrant ALL=(ALL) NOPASSWD: ALL' | sudo tee /etc/sudoers.d/vagrant

For admin user dba, the /etc/sudoers.d/dba content should be:

%dba ALL=(ALL) NOPASSWD: ALL

If your security policy prohibits passwordless sudo, remove the NOPASSWD: part so that the rule reads %dba ALL=(ALL) ALL.

Ansible relies on sudo to execute commands with root privileges on managed nodes.

In environments where sudo is unavailable (e.g., inside Docker containers), install sudo first.

SSH

Your current user should have passwordless SSH access to all managed nodes as the corresponding admin user.

Your current user can be the admin user itself, but this isn’t required—as long as you can SSH as the admin user.

SSH configuration is Linux 101, but here are the basics:

Generate SSH Key

If you don’t have an SSH key pair, generate one:

ssh-keygen -t rsa -b 2048 -N '' -f ~/.ssh/id_rsa -q

Pigsty will do this for you during the bootstrap stage if you lack a key pair.

Copy SSH Key

Distribute your generated public key to remote (and local) servers, placing it in the admin user’s ~/.ssh/authorized_keys file on all nodes.

Use the ssh-copy-id utility:

ssh-copy-id <ip> # Interactive password entry

sshpass -p <password> ssh-copy-id <ip> # Non-interactive (use with caution)

Using Alias

When direct SSH access is unavailable (jumpserver, non-standard port, different credentials), configure SSH aliases in ~/.ssh/config:

Host meta
    HostName 10.10.10.10
    User dba                      # Different user on remote
    IdentityFile /etc/dba/id_rsa  # Non-standard key
    Port 24                       # Non-standard port

Reference the alias in the inventory using ansible_host for the real SSH alias:

nodes:
  hosts: # If node `10.10.10.10` requires SSH alias `meta`
    10.10.10.10: { ansible_host: meta } # Access via `ssh meta`

SSH parameters work directly in Ansible. See Ansible Inventory Guide for details.

This technique enables accessing nodes in private networks via jumpservers, using different ports and credentials, or using your local laptop as an admin node.

Check Accessibility

You should be able to passwordlessly ssh from the admin node to all managed nodes as your current user.

The remote user (admin user) should have privileges to run passwordless sudo commands.

To verify passwordless ssh/sudo works, run ssh <ip|alias> 'sudo ls' from the admin node against each managed node.

If there’s no password prompt or error, passwordless ssh/sudo is working as expected.
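With many nodes, the check can be looped. A minimal sketch, assuming OpenSSH and sudo on the nodes; check_nodes is a hypothetical helper, not a Pigsty command. BatchMode=yes makes ssh fail rather than prompt for a password, and sudo -n does the same for sudo:

```shell
# check_nodes HOST...: test passwordless ssh + sudo on each host and
# report per-host results; returns non-zero if any host fails.
check_nodes() {
  rc=0
  for host in "$@"; do
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" 'sudo -n true' </dev/null; then
      echo "$host ok"
    else
      echo "$host FAILED"
      rc=1
    fi
  done
  return "$rc"
}

# Usage: check_nodes 10.10.10.10 10.10.10.11 10.10.10.12 10.10.10.13
```

Hosts can be IP addresses or the SSH aliases defined in ~/.ssh/config.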

Firewall

Production deployments typically require firewall configuration to block unauthorized port access.

By default, block inbound access from office/Internet networks except:

- SSH port 22 for node access
- HTTP (80) / HTTPS (443) for WebUI services
- PostgreSQL port 5432 for database access

If accessing PostgreSQL via other ports, allow them accordingly.

See used ports for the complete port list.

- 5432: PostgreSQL database
- 6432: Pgbouncer connection pooler
- 5433: PG primary service
- 5434: PG replica service
- 5436: PG default service
- 5438: PG offline service

5 - Sandbox

4-node sandbox environment for learning, testing, and demonstration

Pigsty provides a standard 4-node sandbox environment for learning, testing, and feature demonstration.

The sandbox uses fixed IP addresses and predefined identifiers, making it easy to reproduce various demo use cases.

Description

The default sandbox environment consists of 4 nodes, using the ha/full.yml configuration template.

| ID | IP Address | Node | PostgreSQL | INFRA | ETCD | MINIO |
|---|---|---|---|---|---|---|
| 1 | 10.10.10.10 | meta | pg-meta-1 | infra-1 | etcd-1 | minio-1 |
| 2 | 10.10.10.11 | node-1 | pg-test-1 | | | |
| 3 | 10.10.10.12 | node-2 | pg-test-2 | | | |
| 4 | 10.10.10.13 | node-3 | pg-test-3 | | | |

The sandbox configuration can be summarized as the following config:

all:
  children:
    infra: { hosts: { 10.10.10.10: { infra_seq: 1 } } }
    etcd: { hosts: { 10.10.10.10: { etcd_seq: 1 } }, vars: { etcd_cluster: etcd } }
    minio: { hosts: { 10.10.10.10: { minio_seq: 1 } }, vars: { minio_cluster: minio } }
    pg-meta:
      hosts: { 10.10.10.10: { pg_seq: 1, pg_role: primary } }
      vars: { pg_cluster: pg-meta }
    pg-test:
      hosts:
        10.10.10.11: { pg_seq: 1, pg_role: primary }
        10.10.10.12: { pg_seq: 2, pg_role: replica }
        10.10.10.13: { pg_seq: 3, pg_role: replica }
      vars: { pg_cluster: pg-test }
  vars:
    version: v4.0.0
    admin_ip: 10.10.10.10
    region: default
    pg_version: 18

PostgreSQL Clusters

The sandbox comes with a single-instance PostgreSQL cluster pg-meta on the meta node:

10.10.10.10 meta pg-meta-1

10.10.10.2 pg-meta # Optional L2 VIP

There’s also a 3-instance PostgreSQL HA cluster pg-test deployed on the other three nodes:

10.10.10.11 node-1 pg-test-1

10.10.10.12 node-2 pg-test-2

10.10.10.13 node-3 pg-test-3

10.10.10.3 pg-test # Optional L2 VIP

Two optional L2 VIPs are bound to the primary instances of pg-meta and pg-test clusters respectively.

Infrastructure

The meta node also hosts:

- ETCD cluster: Single-node etcd cluster providing DCS service for PostgreSQL HA
- MinIO cluster: Single-node minio cluster providing S3-compatible object storage

10.10.10.10 etcd-1

10.10.10.10 minio-1

Creating Sandbox

Pigsty provides out-of-the-box templates. You can use Vagrant to create a local sandbox, or use Terraform to create a cloud sandbox.

Local Sandbox (Vagrant)

The local sandbox uses VirtualBox/libvirt to create local virtual machines, running at no cost on your Mac / PC.

To run the full 4-node sandbox, your machine should have at least 4 CPU cores and 8GB memory.

cd ~/pigsty

make full # Create 4-node sandbox with default RockyLinux 9 image

make full9 # Create 4-node sandbox with RockyLinux 9

make full12 # Create 4-node sandbox with Debian 12

make full24 # Create 4-node sandbox with Ubuntu 24.04

For more details, please refer to Vagrant documentation.

Cloud Sandbox (Terraform)

The cloud sandbox uses public cloud APIs to create virtual machines. It is easy to create and destroy, billed pay-as-you-go, and ideal for quick testing.

Use spec/aliyun-full.tf template to create a 4-node sandbox on Alibaba Cloud:

cd ~/pigsty/terraform

cp spec/aliyun-full.tf terraform.tf

terraform init

terraform apply

For more details, please refer to Terraform documentation.

Other Specs

Besides the standard 4-node sandbox, Pigsty also provides other environment specs:

Single Node Environment (meta)

The simplest 1-node environment for quick start, development, and testing:

make meta # Create single-node devbox

Two Node Environment (dual)

2-node environment for testing primary-replica replication:

make dual # Create 2-node environment

Three Node Environment (trio)

3-node environment for testing basic high availability:

make trio # Create 3-node environment

Production Simulation (simu)

20-node large simulation environment for full production environment testing:

make simu # Create 20-node production simulation environment

This environment includes:

- 3 infrastructure nodes (meta1, meta2, meta3)
- 2 HAProxy proxy nodes
- 4 MinIO nodes
- 5 ETCD nodes
- 6 PostgreSQL nodes (2 clusters, 3 nodes each)

6 - Vagrant

Create local virtual machine environment with Vagrant

Vagrant is a popular local virtualization tool that creates local virtual machines in a declarative manner.

Pigsty requires a Linux environment to run. You can use Vagrant to easily create Linux virtual machines locally for testing.

Quick Start

Install Dependencies

First, ensure you have Vagrant and a virtual machine provider (such as VirtualBox or libvirt) installed on your system.

On macOS, you can use Homebrew for one-click installation:

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

brew install vagrant virtualbox ansible

VirtualBox requires reboot after installation

After installing VirtualBox, you need to restart your system and allow its kernel extensions in System Preferences.

On Linux, you can use VirtualBox or vagrant-libvirt as the VM provider.

Create Virtual Machines

Use the Pigsty-provided make shortcuts to create virtual machines:

cd ~/pigsty

make meta # 1 node devbox for quick start, development, and testing

make full # 4 node sandbox for HA testing and feature demonstration

make simu # 20 node simubox for production environment simulation

# Other less common specs

make dual # 2 node environment

make trio # 3 node environment

make deci # 10 node environment

You can use variant aliases to specify different operating system images:

make meta9 # Create single node with RockyLinux 9

make full12 # Create 4-node sandbox with Debian 12

make simu24 # Create 20-node simubox with Ubuntu 24.04

Available OS suffixes: 7 (EL7), 8 (EL8), 9 (EL9), 10 (EL10), 11 (Debian 11), 12 (Debian 12), 13 (Debian 13), 20 (Ubuntu 20.04), 22 (Ubuntu 22.04), 24 (Ubuntu 24.04)

Build Environment

You can also use the following aliases to create Pigsty build environments. These templates won’t replace the base image:

make oss # 3 node OSS build environment

make pro # 5 node PRO build environment

make rpm # 3 node EL7/8/9 build environment

make deb # 5 node Debian11/12 Ubuntu20/22/24 build environment

make all # 7 node full build environment

Spec Templates

Pigsty provides multiple predefined VM specs in the vagrant/spec/ directory:

| Template | Nodes | Spec | Description | Alias |
|---|---|---|---|---|
| meta.rb | 1 node | 2c4g x 1 | Single-node devbox | Devbox |
| dual.rb | 2 nodes | 1c2g x 2 | Two-node environment | |
| trio.rb | 3 nodes | 1c2g x 3 | Three-node environment | |
| full.rb | 4 nodes | 2c4g + 1c2g x 3 | 4-node full sandbox | Sandbox |
| deci.rb | 10 nodes | Mixed | 10-node environment | |
| simu.rb | 20 nodes | Mixed | 20-node production simubox | Simubox |
| minio.rb | 4 nodes | 1c2g x 4 + disk | MinIO test environment | |
| oss.rb | 3 nodes | 1c2g x 3 | 3-node OSS build environment | |
| pro.rb | 5 nodes | 1c2g x 5 | 5-node PRO build environment | |
| rpm.rb | 3 nodes | 1c2g x 3 | 3-node EL build environment | |
| deb.rb | 5 nodes | 1c2g x 5 | 5-node Deb build environment | |
| all.rb | 7 nodes | 1c2g x 7 | 7-node full build environment | |

Each spec file contains a Specs variable describing the VM nodes. For example, full.rb contains the 4-node sandbox definition:

# full: pigsty full-featured 4-node sandbox for HA-testing & tutorial & practices

Specs = [

{ "name" => "meta" , "ip" => "10.10.10.10" , "cpu" => "2" , "mem" => "4096" , "image" => "bento/rockylinux-9" },

{ "name" => "node-1" , "ip" => "10.10.10.11" , "cpu" => "1" , "mem" => "2048" , "image" => "bento/rockylinux-9" },

{ "name" => "node-2" , "ip" => "10.10.10.12" , "cpu" => "1" , "mem" => "2048" , "image" => "bento/rockylinux-9" },

{ "name" => "node-3" , "ip" => "10.10.10.13" , "cpu" => "1" , "mem" => "2048" , "image" => "bento/rockylinux-9" },

]

simu Spec Details

simu.rb provides a 20-node production environment simulation configuration:

- 3 x infra nodes (meta1-3): 4c16g

- 2 x haproxy nodes (proxy1-2): 1c2g

- 4 x minio nodes (minio1-4): 1c2g

- 5 x etcd nodes (etcd1-5): 1c2g

- 6 x pgsql nodes (pg-src-1-3, pg-dst-1-3): 2c4g
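
Before launching the simubox, it can help to add up what the spec asks of the host. The sketch below sums the node groups listed above. `total_resources` is a hypothetical helper name used only for illustration; it is not part of Pigsty.

```shell
#!/bin/sh
# Sum CPU cores and memory (GB) over "count cores gb" triples read from stdin.
# total_resources is a hypothetical helper, not a Pigsty command.
total_resources() {
    awk '{ cpu += $1 * $2; mem += $1 * $3 } END { printf "%dc%dg\n", cpu, mem }'
}

# simu spec node groups: node count, cores per node, GB of memory per node
total_resources <<'EOF'
3 4 16
2 1 2
4 1 2
5 1 2
6 2 4
EOF
# prints: 35c94g
```

The total is the sum of what the VMs request; whether the host needs that much physical capacity depends on how far your hypervisor overcommits CPU and memory.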

Config Script

Use the vagrant/config script to generate the final Vagrantfile based on spec and options:

cd ~/pigsty

vagrant/config [spec] [image] [scale] [provider]

# Examples

vagrant/config meta # Use 1-node spec with default EL9 image

vagrant/config dual el9 # Use 2-node spec with EL9 image

vagrant/config trio d12 2 # Use 3-node spec with Debian 12, double resources

vagrant/config full u22 4 # Use 4-node spec with Ubuntu 22, 4x resources

vagrant/config simu u24 1 libvirt # Use 20-node spec with Ubuntu 24, libvirt provider

Image Aliases

The config script supports various image aliases:

| Distro | Alias | Vagrant Box |
|---|---|---|
| CentOS 7 | el7, 7, centos | generic/centos7 |
| Rocky 8 | el8, 8, rocky8 | bento/rockylinux-8 |
| Rocky 9 | el9, 9, rocky9, el | bento/rockylinux-9 |
| Rocky 10 | el10, rocky10 | rockylinux/10 |
| Debian 11 | d11, 11, debian11 | generic/debian11 |
| Debian 12 | d12, 12, debian12 | generic/debian12 |
| Debian 13 | d13, 13, debian13 | cloud-image/debian-13 |
| Ubuntu 20.04 | u20, 20, ubuntu20 | generic/ubuntu2004 |
| Ubuntu 22.04 | u22, 22, ubuntu22, ubuntu | generic/ubuntu2204 |
| Ubuntu 24.04 | u24, 24, ubuntu24 | bento/ubuntu-24.04 |

Resource Scaling

You can use the VM_SCALE environment variable to adjust the resource multiplier (default is 1):

VM_SCALE=2 vagrant/config meta # Double the CPU/memory resources for meta spec

For example, using VM_SCALE=4 with the meta spec will adjust the default 2c4g to 8c16g:

Specs = [

{ "name" => "meta" , "ip" => "10.10.10.10", "cpu" => "8" , "mem" => "16384" , "image" => "bento/rockylinux-9" },

]
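
The 2c4g to 8c16g example suggests that scaling multiplies both CPU count and memory by the same integer. The sketch below reproduces only that arithmetic, under that assumption; `scale_spec` is a hypothetical helper, and the actual logic in vagrant/config may differ.

```shell
#!/bin/sh
# scale_spec CPU MEM_MB SCALE: print the scaled "cpu mem" pair.
# Assumes plain integer multiplication of both fields (illustration only).
scale_spec() {
    echo "$(( $1 * $3 )) $(( $2 * $3 ))"
}

scale_spec 2 4096 4   # meta node at VM_SCALE=4, prints: 8 16384
scale_spec 1 2048 2   # 1c2g node at VM_SCALE=2, prints: 2 4096
```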

simu spec doesn't support scaling

The simu spec doesn’t support resource scaling. The scale parameter will be automatically ignored because its resource configuration is already optimized for simulation scenarios.

VM Management

Pigsty provides a set of Makefile shortcuts for managing virtual machines:

make # Equivalent to make start

make new # Destroy existing VMs and create new ones

make ssh # Write VM SSH config to ~/.ssh/ (must run after creation)

make dns # Write VM DNS records to /etc/hosts (optional)

make start # Start VMs and configure SSH (up + ssh)

make up # Start VMs with vagrant up

make halt # Shutdown VMs (alias: down, dw)

make clean # Destroy VMs (alias: del, destroy)

make status # Show VM status (alias: st)

make pause # Pause VMs (alias: suspend)

make resume # Resume VMs

make nuke # Destroy all VMs and volumes with virsh (libvirt only)

make info # Show libvirt info (VMs, networks, storage volumes)

SSH Keys

Pigsty Vagrant templates use your ~/.ssh/id_rsa[.pub] as the SSH key for VMs by default.

Before starting, ensure you have a valid SSH key pair. If not, generate one with:

ssh-keygen -t rsa -b 2048 -N '' -f ~/.ssh/id_rsa -q
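
If a key already exists at that path, ssh-keygen asks before overwriting it. A small guard, sketched below, generates a key pair only when none is present. `ensure_ssh_key` is a hypothetical helper name, not something Pigsty ships.

```shell
#!/bin/sh
# ensure_ssh_key [PATH]: generate an RSA key pair only when PATH does not exist.
# Defaults to ~/.ssh/id_rsa, the key the Vagrant templates use by default.
ensure_ssh_key() {
    key="${1:-$HOME/.ssh/id_rsa}"
    if [ -f "$key" ]; then
        echo "exists: $key"            # keep the existing key untouched
    else
        mkdir -p "$(dirname "$key")"
        ssh-keygen -t rsa -b 2048 -N '' -f "$key" -q && echo "created: $key"
    fi
}
```

Call `ensure_ssh_key` with no arguments to check the default location, or pass another path to test the behavior on a scratch file first.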

Supported Images

Pigsty currently uses the following Vagrant Boxes for testing:

# x86_64 / amd64

el8 : bento/rockylinux-8 (libvirt, 202502.21.0, (amd64))

el9 : bento/rockylinux-9 (libvirt, 202502.21.0, (amd64))

el10: rockylinux/10 (libvirt)

d11 : generic/debian11 (libvirt, 4.3.12, (amd64))

d12 : generic/debian12 (libvirt, 4.3.12, (amd64))

d13 : cloud-image/debian-13 (libvirt)

u20 : generic/ubuntu2004 (libvirt, 4.3.12, (amd64))

u22 : generic/ubuntu2204 (libvirt, 4.3.12, (amd64))

u24 : bento/ubuntu-24.04 (libvirt, 20250316.0.0, (amd64))

For Apple Silicon (aarch64) architecture, fewer images are available:

# aarch64 / arm64

bento/rockylinux-9 (virtualbox, 202502.21.0, (arm64))

bento/ubuntu-24.04 (virtualbox, 202502.21.0, (arm64))

You can find more available Box images on Vagrant Cloud.

Environment Variables

You can use the following environment variables to control Vagrant behavior:

export VM_SPEC='meta' # Spec name

export VM_IMAGE='bento/rockylinux-9' # Image name

export VM_SCALE='1' # Resource scaling multiplier

export VM_PROVIDER='virtualbox' # Virtualization provider

export VAGRANT_EXPERIMENTAL=disks # Enable experimental disk features

Notes

VirtualBox Network Configuration

When using older versions of VirtualBox as Vagrant provider, additional configuration is required to use 10.x.x.x CIDR as Host-Only network:

echo "* 10.0.0.0/8" | sudo tee -a /etc/vbox/networks.conf
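
Running the append command more than once leaves duplicate lines in the file. The sketch below adds the entry only when it is missing. `allow_cidr` is a hypothetical helper, and the demonstration writes to a scratch file rather than /etc/vbox/networks.conf, which needs root privileges.

```shell
#!/bin/sh
# allow_cidr FILE CIDR: append "* CIDR" to FILE unless that exact line exists.
allow_cidr() {
    file="$1"; line="* $2"
    if [ -f "$file" ] && grep -qxF -- "$line" "$file"; then
        return 0                       # already present, nothing to do
    fi
    echo "$line" >> "$file"
}

# Demonstrate on a scratch file.
conf="$(mktemp)"
allow_cidr "$conf" 10.0.0.0/8
allow_cidr "$conf" 10.0.0.0/8          # second call is a no-op
cat "$conf"                            # prints: * 10.0.0.0/8
```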

First-time image download is slow

The first time you use Vagrant to start a specific operating system, it will download the corresponding Box image file (typically 1-2 GB). After download, the image is cached and reused for subsequent VM creation.

libvirt Provider

If you’re using libvirt as the provider, you can use make info to view VMs, networks, and storage volume information, and make nuke to forcefully destroy all related resources.

7 - Terraform

Create virtual machine environment on public cloud with Terraform

Terraform is a popular “Infrastructure as Code” tool that you can use to create virtual machines on public clouds with one click.

Pigsty provides Terraform templates for Alibaba Cloud, AWS, and Tencent Cloud as examples.

Quick Start

On macOS, you can install Terraform with Homebrew. For other platforms, refer to the Terraform Official Installation Guide.

Initialize and Apply

Enter the Terraform directory, select a template, initialize provider plugins, and apply the configuration:

cd ~/pigsty/terraform

cp spec/aliyun-meta.tf terraform.tf # Select template

terraform init # Install cloud provider plugins (first use)

terraform apply # Generate execution plan and create resources

After running the apply command, type yes to confirm when prompted. Terraform will create VMs and related cloud resources for you.

Get IP Address

After creation, print the public IP address of the admin node:

terraform output | grep -Eo '[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}'
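
The pattern above matches any dotted-quad string and does not check that each octet is in range, so it prints every address that appears in the output. A minimal sketch of capturing the first match in a variable follows. The sample line and the `meta_ip` name are hypothetical, since the real `terraform output` text depends on the template.

```shell
#!/bin/sh
# extract_ips: print every dotted-quad found on stdin, one per line.
extract_ips() {
    grep -Eo '[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}'
}

# Hypothetical sample standing in for real `terraform output` text.
sample='meta_ip = "47.100.1.23"'
admin_ip="$(printf '%s\n' "$sample" | extract_ips | head -n 1)"
echo "$admin_ip"                       # prints: 47.100.1.23
```

With a real deployment, replace the `printf` stage with `terraform output`.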

Use the ssh script to automatically configure SSH aliases and distribute keys:

./ssh # Write SSH config to ~/.ssh/pigsty_config and copy keys

This script writes the IP addresses from Terraform output to ~/.ssh/pigsty_config and automatically distributes SSH keys using the default password PigstyDemo4.

After configuration, you can login directly using hostnames:

ssh meta # Login using hostname instead of IP

Using SSH Config File

If you want to use the configuration in ~/.ssh/pigsty_config, ensure your ~/.ssh/config includes:

Include ~/.ssh/pigsty_config
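
OpenSSH treats an Include that follows a Host block as part of that block, so the directive is safest on the first line of the file. The sketch below prepends it only when it is missing. `ensure_include` is a hypothetical helper, demonstrated here on a scratch file instead of your real ~/.ssh/config.

```shell
#!/bin/sh
# ensure_include CONFIG: put "Include ~/.ssh/pigsty_config" on the first line
# of CONFIG unless the directive is already present somewhere in the file.
ensure_include() {
    cfg="$1"; directive='Include ~/.ssh/pigsty_config'
    touch "$cfg"
    if grep -qxF -- "$directive" "$cfg"; then
        return 0                       # already configured
    fi
    tmp="$(mktemp)"
    { echo "$directive"; cat "$cfg"; } > "$tmp" && mv "$tmp" "$cfg"
}

# Demonstrate on a scratch file with an existing Host block.
cfg="$(mktemp)"
printf 'Host example\n    User admin\n' > "$cfg"
ensure_include "$cfg"
head -n 1 "$cfg"                       # prints: Include ~/.ssh/pigsty_config
```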

Destroy Resources

After testing, run terraform destroy to remove all created cloud resources in one step.

Template Specs

Pigsty provides multiple predefined cloud resource templates in the terraform/spec/ directory:

| Template File | Cloud Provider | Description |
|---|---|---|
| aliyun-meta.tf | Alibaba Cloud | Single-node meta template, supports all distros and AMD/ARM (default) |
| aliyun-meta-s3.tf | Alibaba Cloud | Single-node template + OSS bucket for PITR backup |
| aliyun-full.tf | Alibaba Cloud | 4-node sandbox template, supports all distros and AMD/ARM |
| aliyun-oss.tf | Alibaba Cloud | 5-node build template, supports all distros and AMD/ARM |
| aliyun-pro.tf | Alibaba Cloud | Multi-distro test template for cross-OS testing |
| aws-cn.tf | AWS | AWS China region 4-node environment |
| tencentcloud.tf | Tencent Cloud | Tencent Cloud 4-node environment |

When using a template, copy the template file to terraform.tf:

cd ~/pigsty/terraform

cp spec/aliyun-full.tf terraform.tf # Use Alibaba Cloud 4-node sandbox template

terraform init && terraform apply

Variable Configuration

Pigsty’s Terraform templates use variables to control architecture, OS distribution, and resource configuration:

Architecture and Distribution

variable "architecture" {

description = "Architecture type (amd64 or arm64)"

type = string

default = "amd64" # Comment this line to use arm64

#default = "arm64" # Uncomment to use arm64

}

variable "distro" {

description = "Distribution code (el8,el9,el10,u22,u24,d12,d13)"

type = string

default = "el9" # Default uses Rocky Linux 9

}

Resource Configuration

The following resource parameters can be configured in the locals block:

locals {

bandwidth = 100 # Public bandwidth (Mbps)

disk_size = 40 # System disk size (GB)

spot_policy = "SpotWithPriceLimit" # Spot policy: NoSpot, SpotWithPriceLimit, SpotAsPriceGo

spot_price_limit = 5 # Max spot price (only effective with SpotWithPriceLimit)

}
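
Because architecture and distro are declared as Terraform input variables, they can also be overridden from the environment without editing terraform.tf. The sketch below assumes the template declares the variables exactly as shown above; the values chosen are only examples.

```shell
#!/bin/sh
# Override template variables without editing terraform.tf.
# Terraform reads TF_VAR_<name> for each declared root-module input variable.
export TF_VAR_architecture="arm64"
export TF_VAR_distro="u24"

# Then, inside ~/pigsty/terraform after `terraform init`:
#   terraform plan     # preview resources with the overridden values
#   terraform apply
```

Values in the locals block are not input variables, so they still have to be edited in the file itself.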

Alibaba Cloud Configuration

Credential Setup

Add your Alibaba Cloud credentials to environment variables, for example in ~/.bash_profile or ~/.zshrc:

export ALICLOUD_ACCESS_KEY="<your_access_key>"

export ALICLOUD_SECRET_KEY="<your_secret_key>"

export ALICLOUD_REGION="cn-shanghai"
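
A quick check that the credentials are actually exported can save a failed apply. The sketch below reports any variable that is unset or empty. `require_env` is a hypothetical helper; the same check works for the Tencent Cloud variables.

```shell
#!/bin/sh
# require_env NAME...: report every named variable that is unset or empty.
# Returns 0 when all are set, 1 otherwise.
require_env() {
    missing=0
    for name in "$@"; do
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing: $name" >&2
            missing=1
        fi
    done
    return "$missing"
}

if require_env ALICLOUD_ACCESS_KEY ALICLOUD_SECRET_KEY ALICLOUD_REGION; then
    echo "credentials present"
else
    echo "export the variables above before running terraform"
fi
```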

Supported Images

The following are commonly used ECS Public OS Image prefixes in Alibaba Cloud:

| Distro | Code | x86_64 Image Prefix | aarch64 Image Prefix |
|---|---|---|---|
| CentOS 7.9 | el7 | centos_7_9_x64 | - |
| Rocky 8.10 | el8 | rockylinux_8_10_x64 | rockylinux_8_10_arm64 |
| Rocky 9.6 | el9 | rockylinux_9_6_x64 | rockylinux_9_6_arm64 |
| Rocky 10.0 | el10 | rockylinux_10_0_x64 | rockylinux_10_0_arm64 |
| Debian 11.11 | d11 | debian_11_11_x64 | - |
| Debian 12.11 | d12 | debian_12_11_x64 | debian_12_11_arm64 |
| Debian 13.2 | d13 | debian_13_2_x64 | debian_13_2_arm64 |
| Ubuntu 20.04 | u20 | ubuntu_20_04_x64 | - |
| Ubuntu 22.04 | u22 | ubuntu_22_04_x64 | ubuntu_22_04_arm64 |
| Ubuntu 24.04 | u24 | ubuntu_24_04_x64 | ubuntu_24_04_arm64 |
| Anolis 8.9 | an8 | anolisos_8_9_x64 | - |
| Alibaba Cloud Linux 3 | al3 | aliyun_3_0_x64 | - |

OSS Storage Configuration

The aliyun-meta-s3.tf template additionally creates an OSS bucket and related permissions for PostgreSQL PITR backup:

- OSS Bucket: Creates a private bucket named pigsty-oss

- RAM User: Creates a dedicated pigsty-oss-user user

- Access Key: Generates AccessKey and saves to ~/pigsty.sk

- IAM Policy: Grants full access to the bucket

AWS Configuration

Credential Setup

Set up AWS configuration and credential files:

# ~/.aws/config

[default]

region = cn-northwest-1

# ~/.aws/credentials

[default]

aws_access_key_id = <YOUR_AWS_ACCESS_KEY>

aws_secret_access_key = <AWS_ACCESS_SECRET>

If you need to use SSH keys, place the key files at:

~/.aws/pigsty-key

~/.aws/pigsty-key.pub

AWS templates may need adjustments

AWS templates are community-contributed examples and may need adjustments based on your specific requirements.

Tencent Cloud Configuration

Credential Setup

Add Tencent Cloud credentials to environment variables:

export TENCENTCLOUD_SECRET_ID="<your_secret_id>"

export TENCENTCLOUD_SECRET_KEY="<your_secret_key>"

export TENCENTCLOUD_REGION="ap-beijing"

Tencent Cloud templates may need adjustments

Tencent Cloud templates are community-contributed examples and may need adjustments based on your specific requirements.

Shortcut Commands

Pigsty provides some Makefile shortcuts for Terraform operations:

cd ~/pigsty/terraform

make u # terraform apply -auto-approve + configure SSH

make d # terraform destroy -auto-approve

make apply # terraform apply (interactive confirmation)

make destroy # terraform destroy (interactive confirmation)

make out # terraform output

make ssh # Run ssh script to configure SSH access

make r # Reset terraform.tf to repository state

Notes

Cloud Resource Costs

Cloud resources created with Terraform incur costs. After testing, promptly use terraform destroy to destroy resources to avoid unnecessary expenses.

It’s recommended to use pay-as-you-go instance types for testing. Templates default to using Spot Instances to reduce costs.

Default Password

The default root password for VMs in all templates is PigstyDemo4. In production environments, be sure to change this password or use SSH key authentication.

Security Group Configuration

Terraform templates automatically create security groups and open necessary ports (all TCP ports open by default). In production environments, adjust security group rules according to actual needs, following the principle of least privilege.

SSH Access

After creation, log in to the admin node over SSH as root, using the public IP address from terraform output and the default password.

You can also use ./ssh or make ssh to write SSH aliases to the config file, then log in using ssh pg-meta.

8 - Security

Security considerations for production Pigsty deployment

Pigsty’s default configuration, with its out-of-the-box authentication and access control models, is secure enough for most scenarios.

If you want to further harden system security, here are some recommendations:

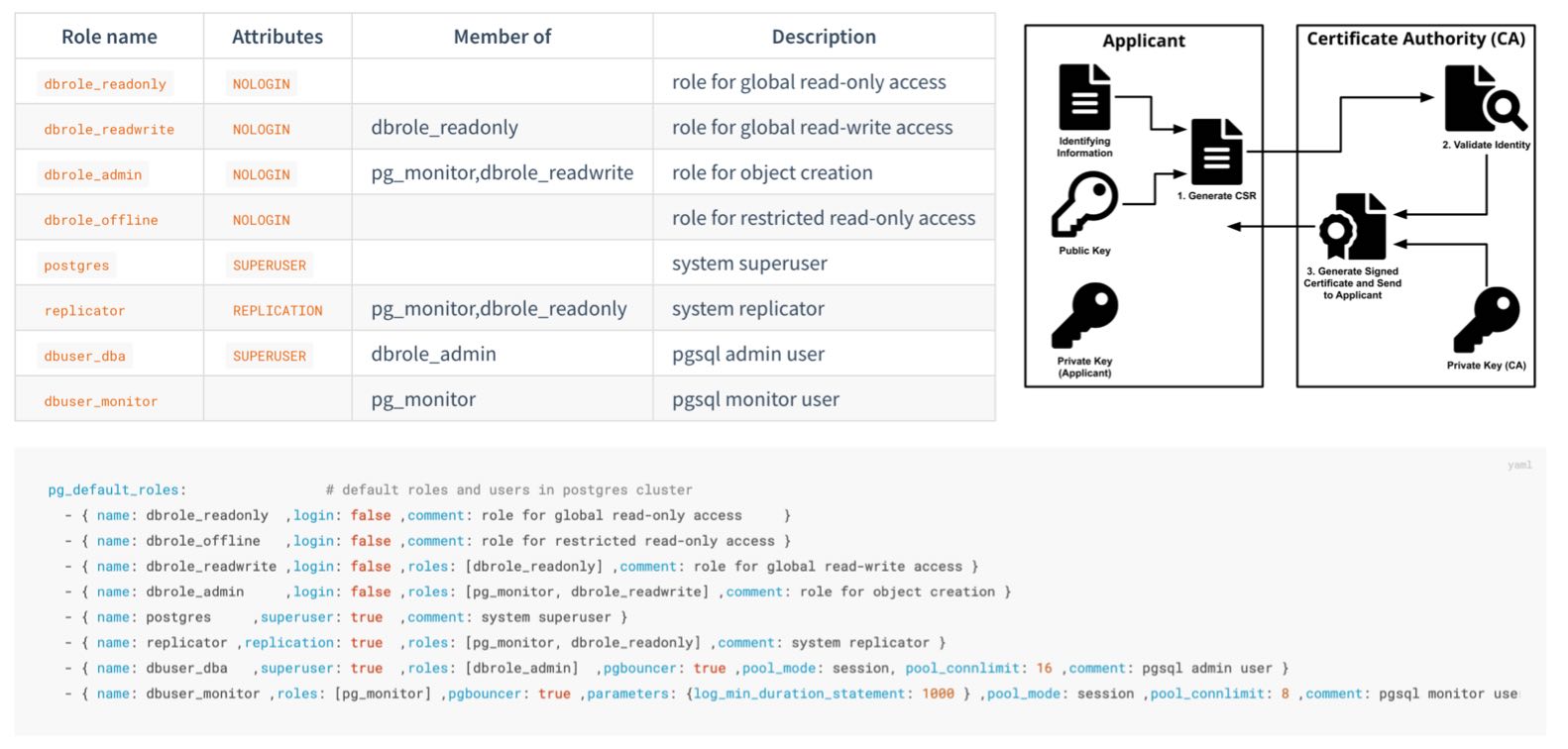

Confidentiality

Important Files

Protect your pigsty.yml configuration file or CMDB

- The pigsty.yml configuration file usually contains highly sensitive information, so keep it secure.

- Strictly control access permissions to admin nodes, limiting access to DBAs or Infra administrators only.

- Strictly control access permissions to the pigsty.yml configuration file repository (if you manage it with git).

Protect your CA private key and other certificates; these files are very important.

- Related files are generated by default in the files/pki directory under the Pigsty source directory on the admin node.

- You should regularly back them up to a secure location.

Passwords

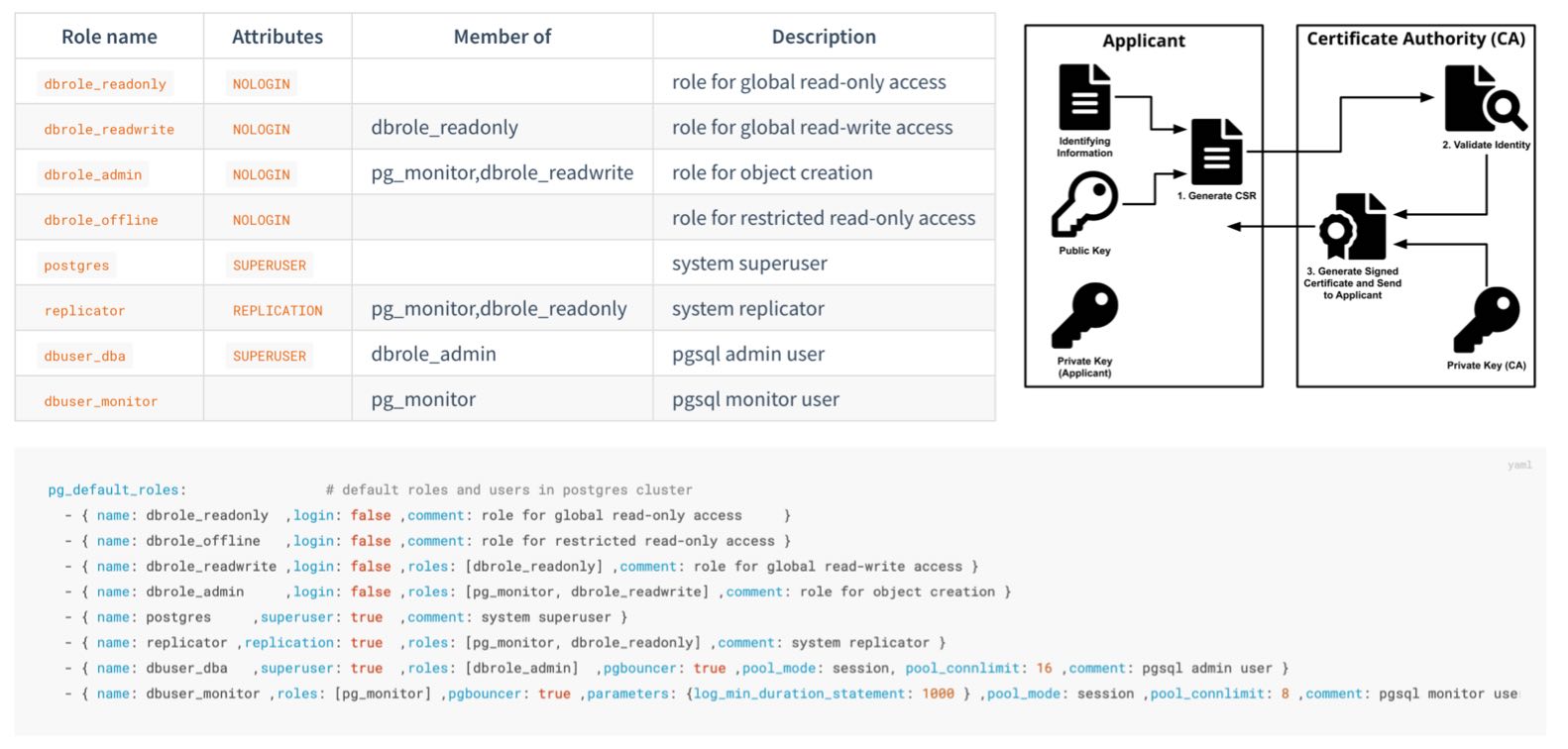

You MUST change these passwords when deploying to production; don’t use the defaults!

If using MinIO, change the default MinIO user passwords and references in pgbackrest

If using remote backup repositories, enable backup encryption and set encryption passwords

- Set [pgbackrest_repo.*.cipher_type](/docs/pgsql/param#pgbackrest_repo) to aes-256-cbc

- You can use ${pg_cluster} as part of the password to avoid all clusters using the same password

Use secure and reliable password encryption algorithms for PostgreSQL

- Use the pg_pwd_enc default value scram-sha-256 instead of legacy md5

- This is the default behavior. Unless there’s a special reason (such as supporting legacy clients), don’t change it back to md5

Use passwordcheck extension to enforce strong passwords

- Add $lib/passwordcheck to pg_libs to enforce password policies.

Encrypt remote backups with encryption algorithms

- Use repo_cipher_type in pgbackrest_repo backup repository definitions to enable encryption

Configure automatic password expiration for business users

You should set an automatic password expiration time for each business user to meet compliance requirements.

After configuring auto-expiration, don’t forget to regularly update these passwords during maintenance.

- { name: dbuser_meta , password: Pleas3-ChangeThisPwd ,expire_in: 7300 ,pgbouncer: true ,roles: [ dbrole_admin ] ,comment: pigsty admin user }

- { name: dbuser_view , password: Make.3ure-Compl1ance ,expire_in: 7300 ,pgbouncer: true ,roles: [ dbrole_readonly ] ,comment: read-only viewer for meta database }

- { name: postgres ,superuser: true ,expire_in: 7300 ,comment: system superuser }

- { name: replicator ,replication: true ,expire_in: 7300 ,roles: [pg_monitor, dbrole_readonly] ,comment: system replicator }

- { name: dbuser_dba ,superuser: true ,expire_in: 7300 ,roles: [dbrole_admin] ,pgbouncer: true ,pool_mode: session, pool_connlimit: 16 , comment: pgsql admin user }

- { name: dbuser_monitor ,roles: [pg_monitor] ,expire_in: 7300 ,pgbouncer: true ,parameters: {log_min_duration_statement: 1000 } ,pool_mode: session ,pool_connlimit: 8 ,comment: pgsql monitor user }

Don’t log password change statements to postgres logs or other logs

SET log_statement TO 'none';

ALTER USER "{{ user.name }}" PASSWORD '{{ user.password }}';

SET log_statement TO DEFAULT;

IP Addresses

Bind specified IP addresses for postgres/pgbouncer/patroni, not all addresses.

- The default pg_listen address is 0.0.0.0, meaning all IPv4 addresses.

- Consider using pg_listen: '${ip},${vip},${lo}' to bind to specific IP address(es) for enhanced security.

Don’t expose any ports directly to public IP, except infrastructure egress Nginx ports (default 80/443)

- For convenience, components like Prometheus/Grafana listen on all IP addresses by default and can be accessed directly via public IP ports

- You can modify their configurations to listen only on internal IP addresses, restricting access through the Nginx portal via domain names only. You can also use security groups or firewall rules to implement these security restrictions.

- For convenience, Redis servers listen on all IP addresses by default. You can modify redis_bind_address to listen only on internal IP addresses.

Use HBA to restrict postgres client access

- There’s a security-enhanced configuration template: security.yml

Restrict patroni management access: only infra/admin nodes can call control APIs

Network Traffic

Use SSL and domain names to access infrastructure components through Nginx

Use SSL to protect Patroni REST API

- patroni_ssl_enabled is disabled by default, because it affects health checks and API calls.

- Note this is a global option; you must decide before deployment.

Use SSL to protect Pgbouncer client traffic

- pgbouncer_sslmode defaults to disable, because SSL has a significant performance impact on Pgbouncer.

Integrity

Configure consistency-first mode for critical PostgreSQL database clusters (e.g., finance-related databases)

- pg_conf is the database tuning template; using crit.yml trades some availability for the best data consistency.

Use crit node tuning template for better consistency.

- node_tune is the host tuning template; using crit reduces the dirty page ratio and lowers data consistency risks.

Enable data checksums to detect silent data corruption.

- pg_checksum defaults to off, but enabling it is recommended.

- When pg_conf = crit.yml is enabled, checksums are mandatory.

Log connection establishment/termination

- This is disabled by default, but enabled by default in the crit.yml config template.

- You can manually configure the cluster to enable the log_connections and log_disconnections parameters.

Enable watchdog if you want to completely eliminate the possibility of split-brain during PG cluster failover

- If your traffic goes through the recommended default HAProxy distribution, you won’t encounter split-brain even without watchdog.

- If your machine hangs and Patroni is killed with kill -9, watchdog can serve as a fallback: automatic shutdown on timeout.

- It’s best not to enable watchdog on infrastructure nodes.

Availability

Use sufficient nodes/instances for critical PostgreSQL database clusters

- You need at least three nodes (able to tolerate one node failure) for production-grade high availability.

- If you only have two nodes, you can tolerate specific standby node failures.

- If you only have one node, use external S3/MinIO for cold backup and WAL archive storage.

For PostgreSQL, make trade-offs between availability and consistency

- pg_rpo: Trade-off between availability and consistency

- pg_rto: Trade-off between failure probability and impact

Don’t access databases directly via fixed IP addresses; use VIP, DNS, HAProxy, or combinations

- Use HAProxy for service access

- In case of failover/switchover, HAProxy will handle client traffic switching.

Use multiple infrastructure nodes in important production deployments (e.g., 1~3)

- Small deployments or lenient scenarios can use a single infrastructure/admin node.

- Large production deployments should have at least two infrastructure nodes as mutual backup.

Use sufficient etcd server instances, and use an odd number of instances (1,3,5,7)
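
The preference for odd counts follows from majority quorum: etcd needs more than half of its members available, so an even member count raises the quorum without raising the number of failures the cluster survives. The sketch below works through the arithmetic; `quorum` and `tolerance` are illustrative helper names.

```shell
#!/bin/sh
# quorum N: members needed for a majority.
# tolerance N: member failures the cluster can survive.
quorum()    { echo $(( $1 / 2 + 1 )); }
tolerance() { echo $(( ($1 - 1) / 2 )); }

for n in 1 2 3 4 5; do
    echo "members=$n quorum=$(quorum "$n") tolerates=$(tolerance "$n")"
done
# members=1 quorum=1 tolerates=0
# members=2 quorum=2 tolerates=0
# members=3 quorum=2 tolerates=1
# members=4 quorum=3 tolerates=1
# members=5 quorum=3 tolerates=2
```

Going from 3 to 4 members still tolerates only one failure, which is why the next useful step up from 3 is 5.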